What is Z-Image-Turbo?

Z-Image-Turbo is the distilled “Turbo” variant in the Z-Image family of image generation models. According to the official Tongyi‑MAI repository, Z-Image is a 6B‑parameter image generation family with multiple checkpoints covering generation, editing, and open‑weight development. Z-Image-Turbo is the high‑speed variant designed to achieve very low inference latency while still delivering high quality. It is targeted at creators and developers who need fast turnarounds without sacrificing photorealism or prompt fidelity.

Official documentation highlights that Z-Image-Turbo can match or exceed leading competitors using only 8 NFEs (Number of Function Evaluations). This makes it a rare model that combines speed and strong visual quality. It is also designed to fit within 16GB VRAM consumer devices, which expands its usability beyond high‑end data center GPUs.

Official architecture: S3‑DiT

The official Z-Image documentation describes the model architecture as S3‑DiT (Scalable Single‑Stream Diffusion Transformer). In this design, text tokens, visual semantic tokens, and image VAE tokens are concatenated into a single stream. This unified sequence improves parameter efficiency compared to dual‑stream approaches. The goal is to create a scalable, high‑capacity diffusion transformer that remains computationally efficient.

In practice, this architecture supports strong prompt adherence and fine‑grained control. Because text and visual tokens are co‑processed in a single stream, prompt structure has a more direct impact on visual composition. This is why Z-Image-Turbo responds well to concise, well‑structured prompts.

Key capabilities from official sources

The official model card and GitHub repository highlight several capabilities that define Z-Image-Turbo’s positioning. These are not general claims — they are explicit features listed by Tongyi‑MAI.

- Photorealistic image generation with strong aesthetic quality and detail.

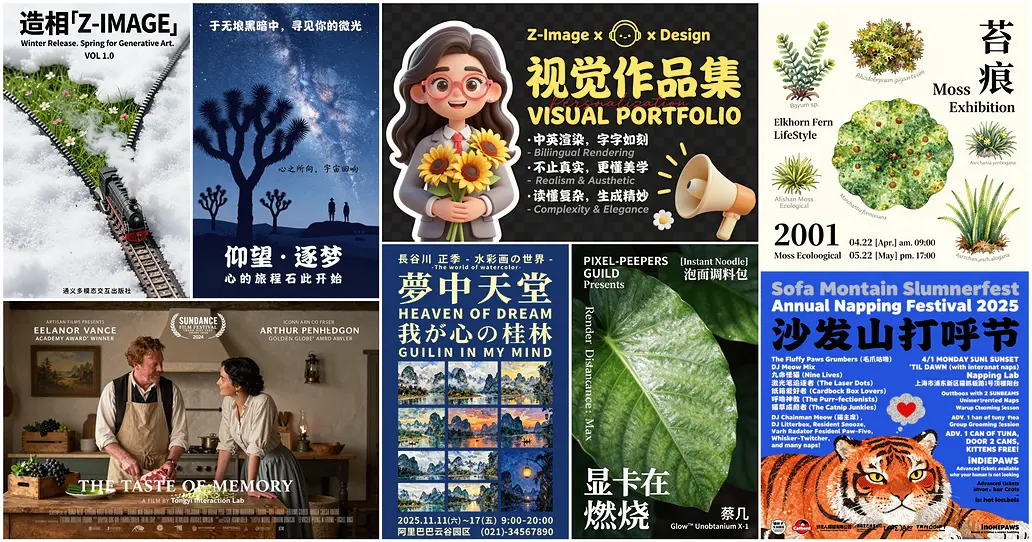

- Bilingual text rendering (English and Chinese) with improved legibility and accuracy.

- Robust instruction adherence, even with complex prompts.

- Sub‑second inference latency on H800 enterprise GPUs and compatibility with 16GB VRAM devices.

- Open‑weight availability under the Apache 2.0 license.

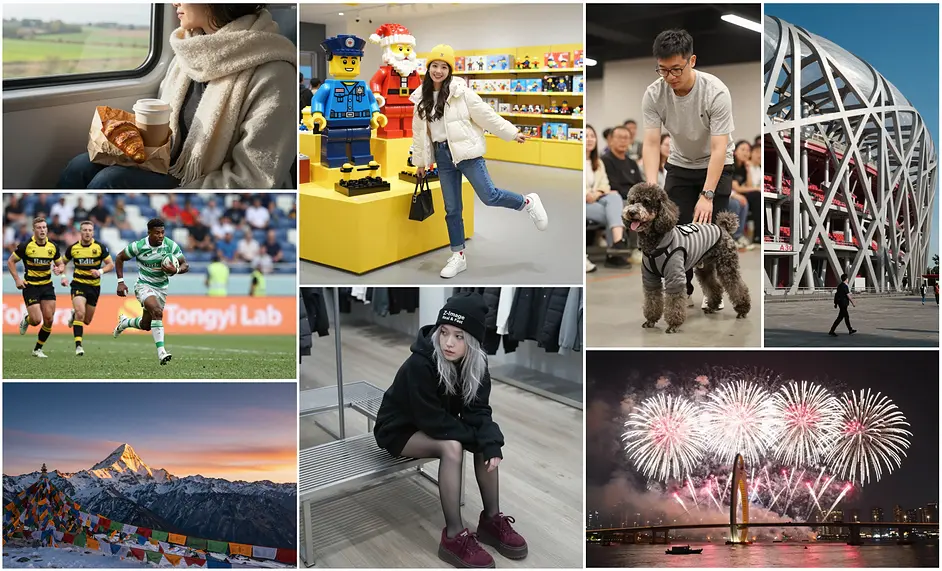

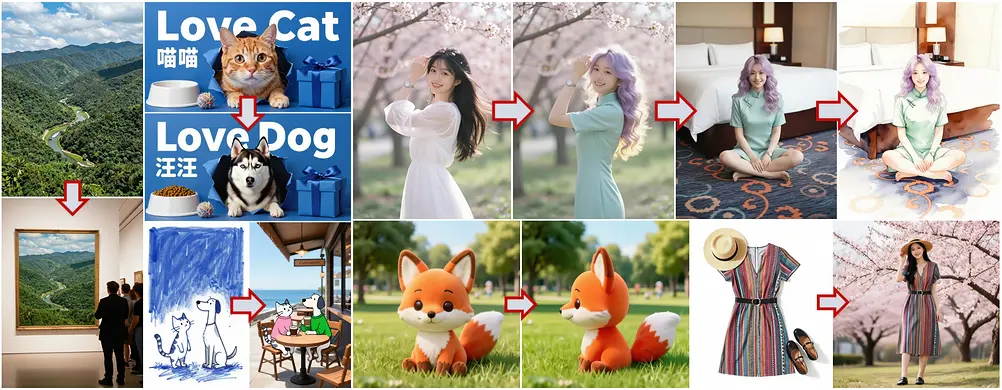

Official showcase images

The images below are official showcase outputs from the Z-Image-Turbo model card. They demonstrate a mix of photorealism, typography, and editing‑style transformations. While the original prompts are not published, these examples show the types of outputs that Z-Image‑Turbo is designed to deliver.

Parameter chart (official specs)

| Parameter | Official value |

|---|---|

| Model family | Z-Image |

| Parameters | 6B |

| Turbo steps | 8 NFEs |

| Latency target | Sub‑second on H800 |

| VRAM target | Fits in 16GB |

| Architecture | S3‑DiT (Scalable Single‑Stream DiT) |

| Text rendering | Bilingual (English & Chinese) |

| License | Apache 2.0 |

How Z-Image-Turbo compares to the rest of the Z-Image family

The official Z-Image repository describes four main checkpoints. Z-Image-Turbo is the distilled speed model; Z-Image is the high‑quality base model; Z-Image-Omni-Base is a generation+editing foundation checkpoint; and Z-Image-Edit is a dedicated editing model. Understanding these variants is key to choosing the right model for your workload.

| Model | Best for | Notes |

|---|---|---|

| Z-Image-Turbo | Fast, low‑latency generation | Distilled model with 8 NFEs and sub‑second latency. |

| Z-Image | Highest quality base generation | Designed for rich aesthetics, diversity, and fine‑tuning. |

| Z-Image-Omni-Base | Open foundation for gen + edit | Raw checkpoint for community fine‑tuning and customization. |

| Z-Image-Edit | Image editing workflows | Fine‑tuned for natural language image edits. |

Prompting guidance (based on official usage patterns)

The official quick‑start example for Z-Image‑Turbo uses 8‑step inference and guidance scale 0.0. This suggests that the Turbo variant is designed to work without traditional classifier‑free guidance, relying instead on the distilled model’s internal alignment. In practice, prompts should be concise, descriptive, and structured.

A good prompt structure starts with subject and environment, then adds details like lighting, materials, and camera framing. For bilingual text rendering, keep the text short and specify language explicitly. For example, you might request “a clean poster with Chinese and English headings, centered, using a bold sans serif font.” This aligns with the model’s documented strength in bilingual text rendering.

If you need more control over style, consider using the base Z-Image model, which supports stronger guidance and negative prompting. But for speed‑critical pipelines — such as real‑time creative testing or rapid iteration — Z-Image-Turbo is the right choice.

Practical use cases for Z-Image-Turbo

Z-Image-Turbo is best suited to scenarios where speed and responsiveness matter. It works well for rapid visual ideation, product mockup exploration, and real‑time creative testing. Because it can generate high‑quality outputs in just a handful of steps, it is also well matched to workflows that require large volumes of images, such as e‑commerce catalogs or marketing variation testing.

The bilingual text rendering capability makes it a strong candidate for generating posters, signage, and localized promotional assets in English and Chinese. When you need to iterate on multiple versions of a headline or label, the low latency can significantly reduce turnaround time.

Performance and hardware considerations

Official documentation notes that Z-Image-Turbo can run in sub‑second latency on H800 GPUs and is designed to fit within 16GB VRAM devices. This makes it unusually practical for local or edge‑adjacent deployment compared to many other 6B‑parameter models. For teams building latency‑sensitive applications, this hardware profile is a major advantage.

Even with the lightweight footprint, output quality remains competitive. The Turbo model is explicitly described as matching or surpassing leading competitors with only 8 NFEs. That efficiency is the core selling point: it compresses the generation pipeline without heavily sacrificing quality.

For latency‑critical applications, this balance of quality and speed is often the deciding factor when selecting the Turbo variant over the base model.

Limitations and practical cautions

Like all generative image models, Z-Image-Turbo has limitations. Very small text can become distorted, and extremely complex layouts may require multiple attempts. The Turbo distillation prioritizes speed, which means that some fine‑grained details may be less consistent than in slower, higher‑quality models.

For production workflows, you should plan for prompt iteration and visual review. When you need strict compliance with branding or typography, consider using a smaller set of prompt templates and validating outputs before publication.

FAQ

Is Z-Image-Turbo open‑source?

Yes. The official model card lists Z-Image-Turbo under the Apache 2.0 license.

What makes Z-Image-Turbo fast?

It is a distilled version of Z-Image that can generate images with only 8 NFEs and is optimized for sub‑second latency on H800 GPUs.

Does Z-Image-Turbo support text rendering?

Yes. Official documentation highlights bilingual text rendering in English and Chinese.

How does it differ from Z-Image?

Z-Image is the high‑quality base model with broader controllability, while Z-Image-Turbo is optimized for speed and low‑latency generation.